MathConstraint benchmark reveals reasoning wall: GPT-5.5 plummets to 67% on verified combinatorial problems

New adaptive benchmark uses constraint satisfaction problems with automated verification to test LLM reasoning, showing frontier models achieve 18.5–87.6% accuracy depending on difficulty tier and tool access.

Frontier language models hit a hard ceiling on combinatorial reasoning when problems are automatically verified and difficulty adapts to model capability, according to MathConstraint, a new benchmark released on arXiv this week.

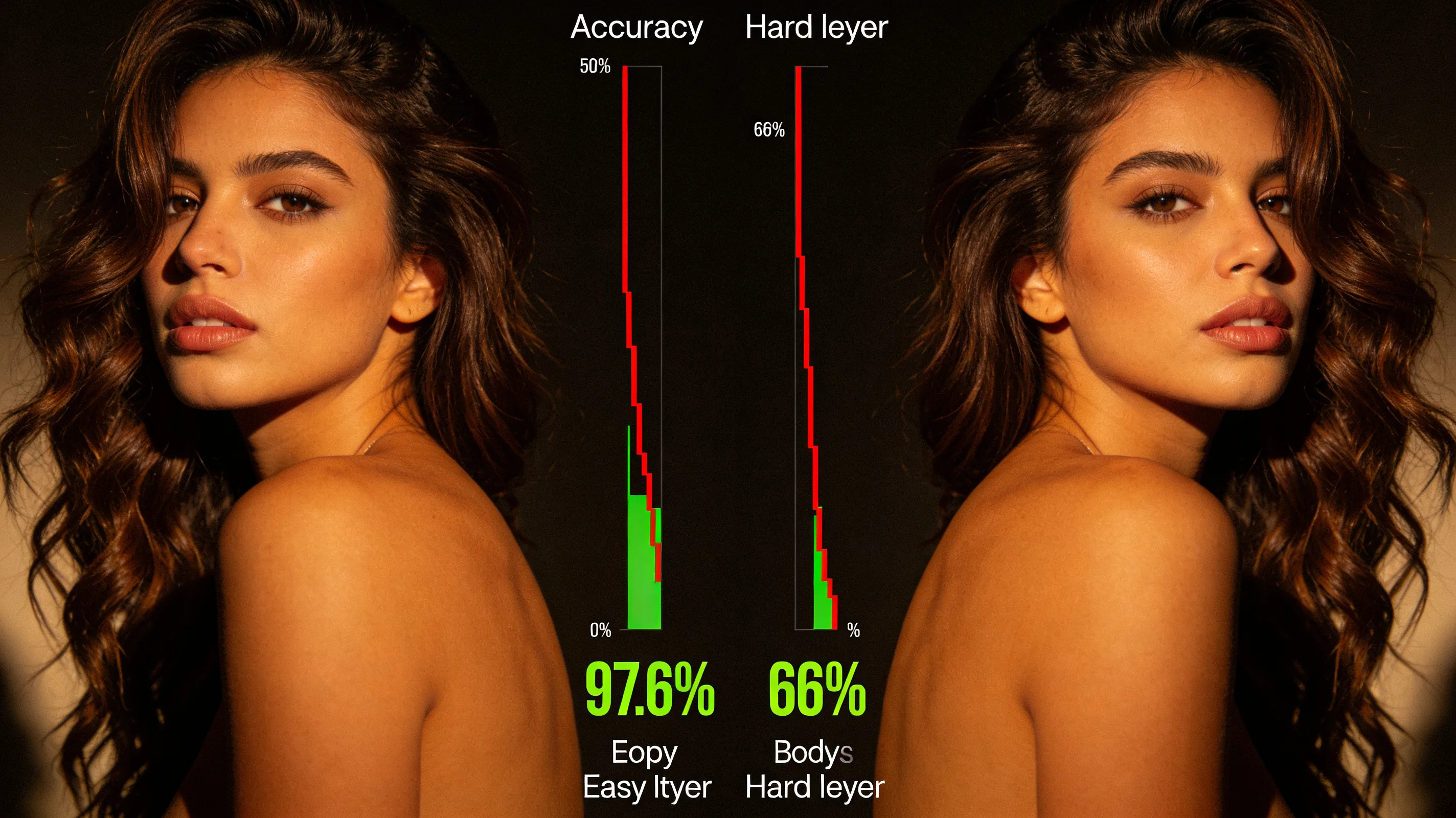

GPT-5.5 accuracy collapsed from 87.6% on easier instances to 66.9% on the full benchmark set. MathConstraint combines constraint satisfaction problems with solver-based verification to create instances that remain challenging as models improve. The benchmark includes two tiers: MathConstraint-Easy (266 instances) where frontier models score between 72.6% (Gemini-3.1-flash-lite) and 87.6% (GPT-5.5), and the full MathConstraint set (329 instances) where the same models drop to between 18.5% (Claude-4.6-sonnet) and 66.9% (GPT-5.5). Unlike existing benchmarks that saturate on fixed datasets or rely on LLM-as-judge scoring, MathConstraint uses parameterized problem types that enable scalable generation of arbitrarily difficult instances, each verified by SAT/SMT solvers.
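To make the idea of parameterized, mechanically verified instances concrete, here is a minimal sketch using graph coloring. It is an illustration only, not the benchmark's actual generator or verifier: the problem type, the function names (`make_instance`, `verify`, `brute_force_solve`), and the parameters are all assumptions, and a brute-force search stands in for the SAT/SMT solvers the benchmark uses.

```python
import random
from itertools import product


def make_instance(n_vertices, edge_prob, n_colors, seed):
    """Generate a random graph-coloring instance (hypothetical problem type).

    Raising n_vertices or edge_prob, or lowering n_colors, tends to make
    the instance harder, which is the sense in which it is parameterized.
    """
    rng = random.Random(seed)
    edges = [
        (u, v)
        for u in range(n_vertices)
        for v in range(u + 1, n_vertices)
        if rng.random() < edge_prob
    ]
    return {"n": n_vertices, "k": n_colors, "edges": edges}


def verify(instance, coloring):
    """Check a candidate answer mechanically, with no LLM judge involved."""
    if len(coloring) != instance["n"]:
        return False
    if any(c not in range(instance["k"]) for c in coloring):
        return False
    # Every edge must join two differently colored vertices.
    return all(coloring[u] != coloring[v] for u, v in instance["edges"])


def brute_force_solve(instance):
    """Stand-in for a real solver: try every coloring (tiny instances only)."""
    for coloring in product(range(instance["k"]), repeat=instance["n"]):
        if verify(instance, coloring):
            return list(coloring)
    return None  # no valid coloring exists


if __name__ == "__main__":
    inst = make_instance(n_vertices=6, edge_prob=0.4, n_colors=3, seed=0)
    print("edges:", inst["edges"])
    print("solution:", brute_force_solve(inst))
```

The point of the design is that a model's answer is accepted or rejected by `verify` alone, so scoring stays objective however the difficulty parameters are turned up.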

Researchers evaluated 12 frontier and open-weight models with and without access to a sandboxed Python environment containing generic constraint solvers. Tool access roughly doubled frontier accuracy on the full set, adding a mean of 28 percentage points and up to 52 points for Claude-4.6-sonnet. Tool-call budget proved equally revealing: halving the allowed rounds from eight to four erased up to 37 percentage points of accuracy, a dependency that most single-budget benchmarks miss entirely. The generator, dataset, and evaluation harness are released as an environment for studying combinatorial reasoning and tool-use behavior under adversarially tunable difficulty.
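The budget effect is easy to see with a toy calculation. The sketch below is not the benchmark's harness and uses made-up numbers: `rounds_needed` is a hypothetical record of how many tool-call rounds a model needed on each instance (`None` meaning it never solved it), and the function `accuracy_at_budget` is an assumed helper. It also assumes, as a simplification, that an instance solved in `r` rounds would be solved under any budget of at least `r`.

```python
def accuracy_at_budget(rounds_needed, budget):
    """Fraction of instances solved within `budget` tool-call rounds.

    rounds_needed: list with one entry per instance, either the number of
    rounds the model used to reach a verified answer, or None if unsolved.
    """
    solved = sum(1 for r in rounds_needed if r is not None and r <= budget)
    return solved / len(rounds_needed)


if __name__ == "__main__":
    # Illustrative toy data only, not results from the benchmark.
    toy_rounds = [1, 2, 3, 5, 6, 8, None, None]
    for budget in (4, 8):
        acc = accuracy_at_budget(toy_rounds, budget)
        print(f"budget={budget}: accuracy={acc:.1%}")
```

Reporting accuracy at a single budget collapses this curve to one point, which is why a benchmark that fixes the budget cannot show how much a model leans on extra rounds.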