Quantization attack injects outliers to trigger hidden malicious behavior in LLMs

Researchers demonstrate the first quantization-conditioned attack that works across advanced compression schemes by injecting outliers that force targeted weight blocks to collapse to zero.

A new attack vector exploits a shared vulnerability in popular LLM quantization methods—including AWQ, GPTQ, and GGUF I-quants—by injecting outliers that cause surrounding weights to round to zero. Released May 15 on arXiv, the technique allows an adversary to release a model that appears benign at full precision but exhibits malicious behavior once users apply quantization for deployment.

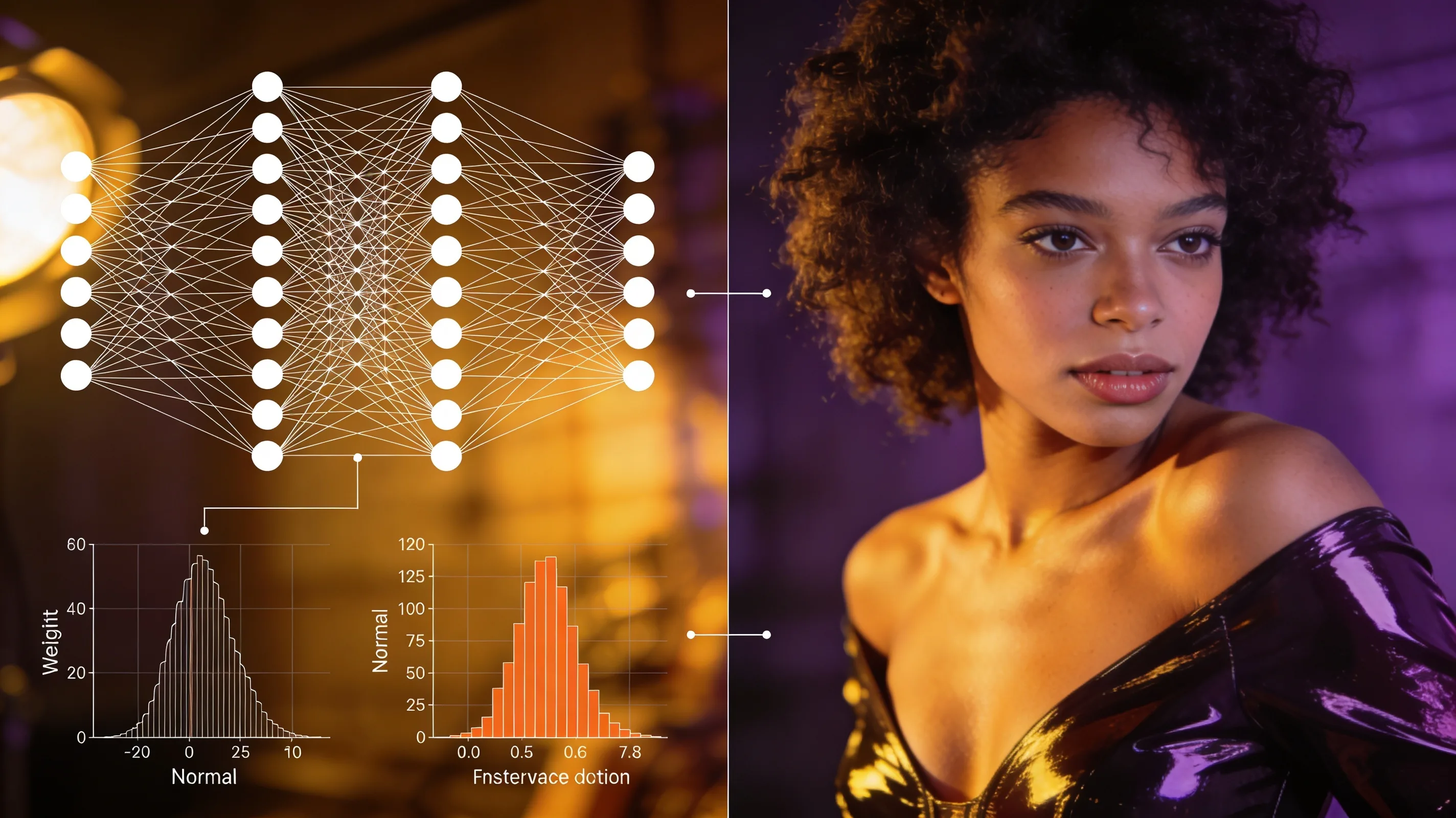

The attack works by inserting large outlier values into specific weight blocks. Many modern quantization methods share a property: when a block contains a large outlier, the algorithm sets the block's scale to accommodate it, which shrinks the other weights relative to the quantization step and often rounds them to zero. By strategically placing outliers, an attacker can induce predictable weight collapse in targeted layers, enabling a range of malicious behaviors, from biased outputs to backdoored responses, that activate only after quantization. Prior quantization-conditioned attacks failed against these sophisticated schemes; this is the first to succeed broadly across them.
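The collapse effect can be illustrated with a toy example. The sketch below is not the researchers' attack, nor the actual AWQ, GPTQ, or GGUF algorithms; it assumes a plain symmetric absmax round-to-nearest quantizer over a single 32-weight block, and the function name `quantize_block`, the 4-bit width, the weight range, and the outlier value `8.0` are all illustrative choices. It only shows why one large value in a block can push every other weight in that block to zero.

```python
import numpy as np

def quantize_block(block, bits=4):
    """Symmetric absmax round-to-nearest quantization of one weight block.

    Hypothetical simplification: real schemes add calibration, error
    compensation, or codebooks on top of block-wise scaling.
    """
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit
    scale = np.max(np.abs(block)) / qmax       # largest weight sets the step size
    q = np.clip(np.round(block / scale), -qmax, qmax)
    return q * scale                           # dequantized weights

rng = np.random.default_rng(0)
block = rng.uniform(-0.05, 0.05, size=32)      # small weights, illustrative range
clean = quantize_block(block)

poisoned = block.copy()
poisoned[0] = 8.0                              # injected outlier (illustrative value)
collapsed = quantize_block(poisoned)

print("nonzero weights without outlier:", np.count_nonzero(clean))
print("nonzero weights next to outlier:", np.count_nonzero(collapsed[1:]))
```

With the outlier present, the step size becomes 8/7, so every weight of magnitude 0.05 or less sits far below half a step and rounds to zero, while the outlier itself survives quantization. Without the outlier, the step size is set by the largest ordinary weight and much of the block's information is preserved.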

Testing across three attack scenarios and multiple LLMs, the researchers achieved high success rates where earlier techniques had consistently failed. The findings extend the known attack surface of quantization beyond simpler schemes. Quantization has become standard practice for memory-efficient deployment, but the research shows that even complex, widely adopted methods carry exploitable risks.