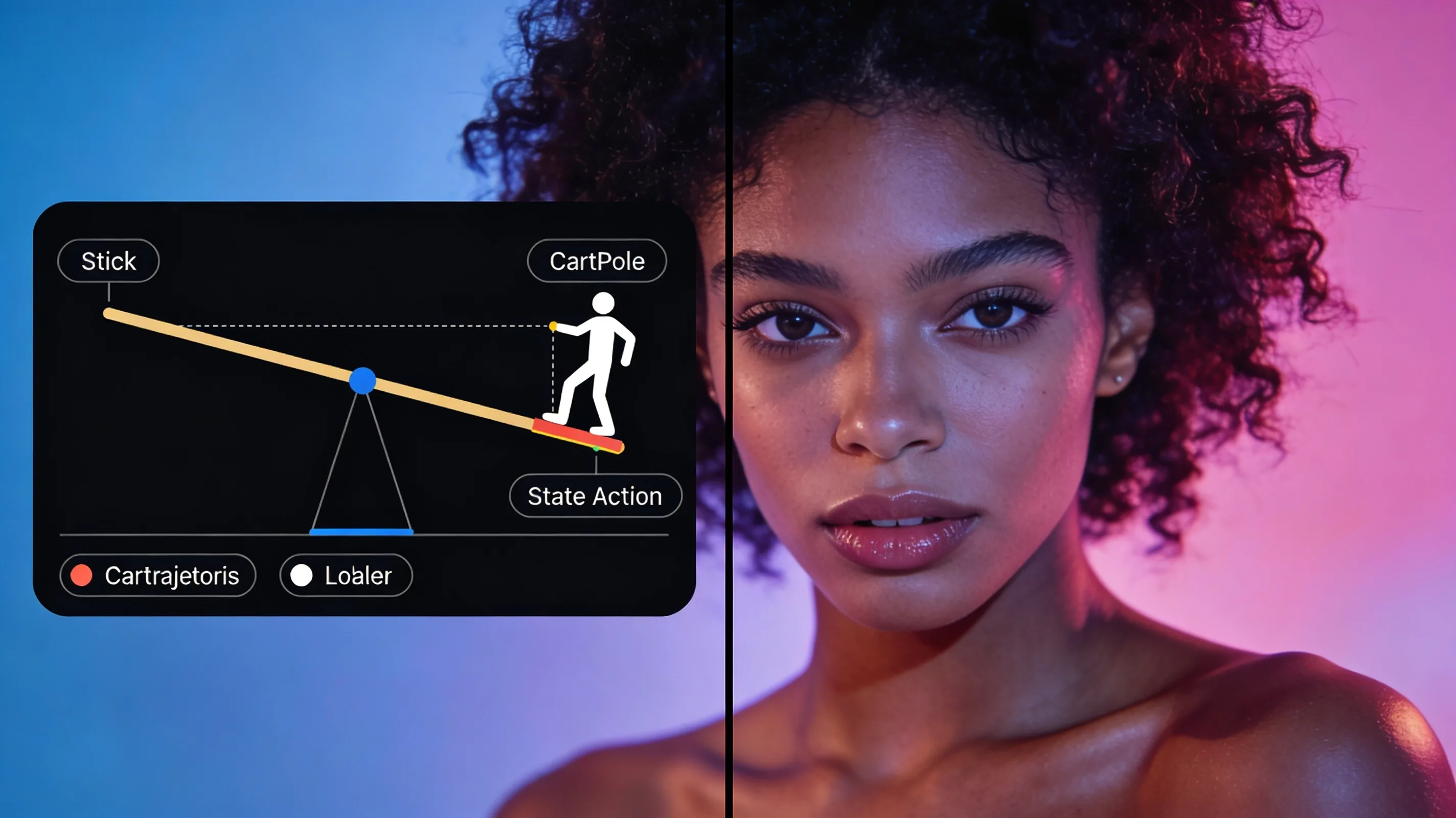

R2PO framework cuts CartPole training to ~500 episodes with trajectory-level LLM critique

Reflective Prompted Policy Optimization uses a two-stage LLM design that inspects full rollout trajectories instead of scalar rewards, achieving near-optimal CartPole performance in ~500 episodes and outperforming deep RL across ten environments.

Reflective Prompted Policy Optimization (R2PO) is a two-stage LLM framework that treats full trajectories (states, actions, and rewards) as first-class evidence for policy search, rather than collapsing them into scalar returns. A Search-LLM proposes candidate policy parameters, the environment executes them, and a Critic-LLM inspects the resulting rollouts to propose targeted revisions grounded in observed behavior. Across ten environments, R2PO using a 20B open-weight model achieves the highest mean best reward and reaches near-optimal CartPole performance in roughly 500 episodes, substantially faster than both deep RL and prior LLM-based methods.
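The propose, execute, critique cycle described above can be sketched as a short loop. This is a minimal illustration and not the paper's implementation: the toy 1-D balancing task stands in for CartPole, and `stub_search`, `stub_critic`, `run_episode`, and `policy_search` are hypothetical names, with plain Python heuristics standing in for the two LLM calls. The point it shows is that the critic reads the recorded (state, action, reward) steps rather than only the total return.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Tuple

Step = Tuple[float, int, float]  # (state before acting, action, reward)


@dataclass
class Rollout:
    steps: List[Step]

    @property
    def total_reward(self) -> float:
        return sum(reward for _, _, reward in self.steps)


def run_episode(theta: float, rng: random.Random, horizon: int = 50) -> Rollout:
    """Toy 1-D task (an assumption, NOT CartPole): keep |x| at or below 1."""
    x = rng.uniform(-0.1, 0.1)
    steps: List[Step] = []
    for _ in range(horizon):
        action = 1 if theta * x > 0 else 0  # 1 pushes right, 0 pushes left
        prev_x = x
        x += (0.05 if action == 1 else -0.05) + rng.uniform(-0.02, 0.02)
        reward = 1.0 if abs(x) <= 1.0 else 0.0
        steps.append((prev_x, action, reward))
        if reward == 0.0:  # left the allowed region: episode ends
            break
    return Rollout(steps)


def stub_search(history: List[Tuple[float, float]], rng: random.Random) -> float:
    """Stand-in for the Search-LLM: proposes a candidate parameter."""
    return rng.uniform(-1.0, 1.0)


def stub_critic(theta: float, rollout: Rollout) -> float:
    """Stand-in for the Critic-LLM: inspects the final recorded step and
    flips the sign of theta if that action pushed the state away from zero."""
    state, action, _ = rollout.steps[-1]
    pushed_away = (state > 0) == (action == 1)
    return -theta if pushed_away else theta


def policy_search(
    search: Callable[[List[Tuple[float, float]], random.Random], float],
    critic: Callable[[float, Rollout], float],
    iterations: int,
    seed: int = 0,
) -> Tuple[float, float]:
    """Alternate global proposals with trajectory-grounded revisions."""
    rng = random.Random(seed)
    history: List[Tuple[float, float]] = []
    for _ in range(iterations):
        theta = search(history, rng)            # stage 1: propose
        rollout = run_episode(theta, rng)       # execute in the environment
        history.append((theta, rollout.total_reward))
        revised = critic(theta, rollout)        # stage 2: critique the rollout
        history.append((revised, run_episode(revised, rng).total_reward))
    return max(history, key=lambda item: item[1])  # best (theta, reward) seen


if __name__ == "__main__":
    print(policy_search(stub_search, stub_critic, iterations=3))
```

In this toy setting a scalar return alone would only say that a candidate did badly; the recorded steps reveal that the policy pushed in the wrong direction, which is the kind of targeted diagnosis the Critic-LLM is meant to provide.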

The paper identifies a failure mode called salience bias: when the Critic-LLM sees multiple rollouts, it fixates on fixing a single failed rollout even when most trajectories succeed. In a three-trajectory variant that shows the critic the best, worst, and median rollouts, this behavior explains 76.6 percent of regressions on CartPole. R2PO mitigates the bias by combining three mechanisms: reasoning over aggregate rollout statistics, median-trajectory selection, and a revision rule. Ablations show that the gains depend on separating global search from behavior-grounded revision and on using selection to filter high-variance edits.
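The mitigation can be illustrated with a small sketch, offered under explicit assumptions. The summary does not specify the exact statistics, the success threshold, or the form of the revision rule, so `aggregate_stats`, `median_rollout_index`, `accept_revision`, and the threshold value below are hypothetical. In particular, the keep-only-if-the-mean-improves test is a placeholder for the paper's rule, not a description of it.

```python
import statistics
from typing import Dict, Sequence


def aggregate_stats(returns: Sequence[float], success_threshold: float) -> Dict[str, float]:
    """Summarize a batch of rollouts so one outlier cannot dominate the critique."""
    return {
        "mean": statistics.mean(returns),
        "median": statistics.median(returns),
        "min": min(returns),
        "max": max(returns),
        "success_rate": sum(r >= success_threshold for r in returns) / len(returns),
    }


def median_rollout_index(returns: Sequence[float]) -> int:
    """Index of the rollout with the median return (upper median for even counts).
    Only this representative trajectory would be shown to the critic in full."""
    order = sorted(range(len(returns)), key=lambda i: returns[i])
    return order[len(order) // 2]


def accept_revision(old_returns: Sequence[float], new_returns: Sequence[float]) -> bool:
    """Placeholder revision rule (an assumption): keep an edit only if it
    raises the mean return across the batch."""
    return statistics.mean(new_returns) > statistics.mean(old_returns)


if __name__ == "__main__":
    # Hypothetical batch: four near-perfect rollouts and one salient failure.
    returns = [500.0, 12.0, 498.0, 500.0, 497.0]
    print(aggregate_stats(returns, success_threshold=475.0))
    print(median_rollout_index(returns))
```

With a batch like the hypothetical one in the usage example, the single low-return rollout lowers the mean but leaves the median and success rate high, which gives the critic a reason not to rewrite a policy that mostly works.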

The preprint (arXiv:2605.08315) demonstrates that even comparatively small LLMs can learn faster and diagnose more precisely when they inspect trajectories directly rather than reducing them to scalar returns.