Retrieval tuning outperforms model swaps in RAG chatbot eval

A customer support RAG bot evaluation revealed that ChromaDB's 0.7 similarity threshold blocked most queries, while the most expensive LLM performed worst. Retrieval tuning and chunk deduplication mattered more than model swaps.

A customer support RAG chatbot running ChromaDB and a standard LLM generation stack had never been properly evaluated. When a developer finally measured response quality with an LLM judge instead of keyword heuristics, the findings upended assumptions about what drives RAG performance.

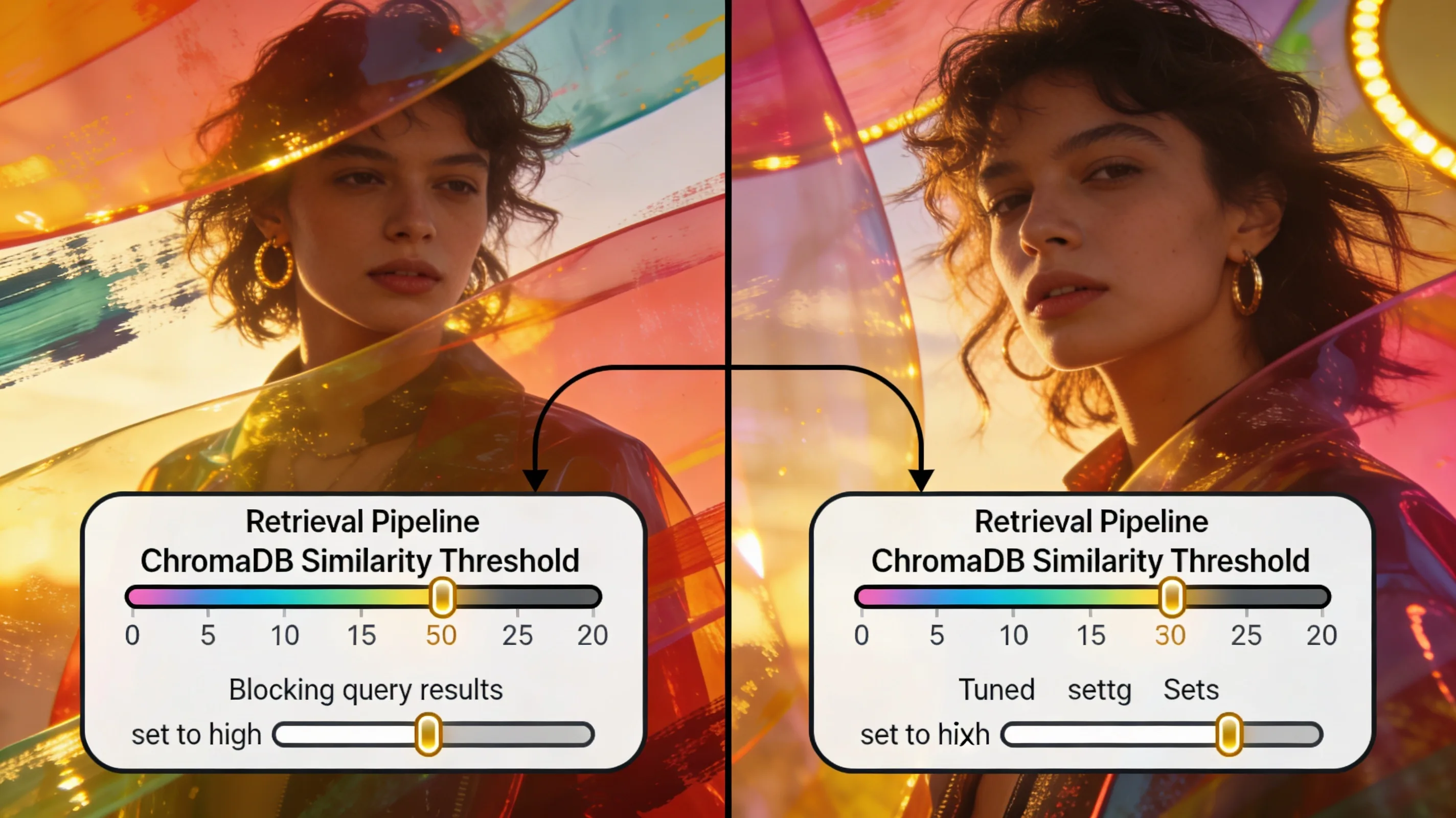

The most expensive model tested was the worst performer. The real bottleneck was retrieval: the similarity threshold on ChromaDB results was set to a cosine distance of 0.7, strict enough that casual openers like "hey what do you guys do?" returned zero documents. With nothing retrieved, the LLM honestly reported that it had no context. Logging the context the model actually received became the first debugging step, because no amount of prompt engineering fixes an empty retrieval set.
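To make the threshold problem concrete, here is a minimal sketch of a distance filter that logs what survives. It assumes the threshold is applied as a maximum cosine distance, and that results arrive in the nested-list shape ChromaDB's `collection.query()` returns (`documents` and `distances`, one inner list per query). The function name `filter_by_distance`, the logger name, and the sample distances are hypothetical, not taken from the original project.

```python
import logging

logger = logging.getLogger("rag.retrieval")  # hypothetical logger name


def filter_by_distance(results, max_distance):
    """Keep (document, distance) pairs at or below max_distance and log them."""
    # Query results hold one inner list per query text; this sketch
    # only inspects the first query.
    documents = results["documents"][0]
    distances = results["distances"][0]
    kept = [(doc, dist) for doc, dist in zip(documents, distances)
            if dist <= max_distance]

    logger.info("retrieved=%d kept=%d max_distance=%.2f",
                len(documents), len(kept), max_distance)
    for doc, dist in kept:
        logger.info("  distance=%.3f text=%r", dist, doc[:80])
    if not kept:
        # This is the failure mode described above: the model gets no context.
        logger.warning("empty context: nothing passed the distance filter")
    return kept


# Made-up result for illustration only; real distances depend on the
# embedding model and the collection's distance metric.
sample = {
    "documents": [["We sell helpdesk software.", "Refund policy overview."]],
    "distances": [[0.35, 0.82]],
}
loose = filter_by_distance(sample, max_distance=0.7)
strict = filter_by_distance(sample, max_distance=0.3)
```

Logging the kept count on every request makes an empty retrieval set visible in the logs before anyone starts rewriting prompts.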

Heuristic evaluators that count keywords or source references produced numbers with no correlation to user satisfaction. Switching to an LLM judge (Claude Haiku 4.5 via OpenRouter, scoring relevance, accuracy, helpfulness, and overall on 0-10 scales) cost a few cents per run and surfaced real quality gaps. Deduplicating chunks before sending them to the model reduced noise: dropping chunks from the same source file with 80 percent token overlap eliminated near-identical FAQ entries that had been triggering hallucinated product names.
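The deduplication step can be sketched roughly as follows. The source does not say how token overlap was measured, so this version makes an assumption: it lowercases the text, splits on whitespace, and divides the shared unique tokens by the size of the smaller chunk. The `source` and `text` keys, the function names, and the sample chunks are hypothetical.

```python
def token_overlap(a, b):
    """Share of the smaller chunk's unique tokens that also appear in the other."""
    tokens_a = set(a.lower().split())
    tokens_b = set(b.lower().split())
    if not tokens_a or not tokens_b:
        return 0.0
    return len(tokens_a & tokens_b) / min(len(tokens_a), len(tokens_b))


def dedupe_chunks(chunks, threshold=0.8):
    """Drop a chunk if it overlaps an already-kept chunk from the same source file."""
    kept = []
    for chunk in chunks:
        is_duplicate = any(
            chunk["source"] == other["source"]
            and token_overlap(chunk["text"], other["text"]) >= threshold
            for other in kept
        )
        if not is_duplicate:
            kept.append(chunk)
    return kept


# Illustrative chunks, not real project data.
chunks = [
    {"source": "faq.md", "text": "You can reset your password from the account settings page"},
    {"source": "faq.md", "text": "You can reset your password from the account settings page."},
    {"source": "billing.md", "text": "You can reset your password from the account settings page"},
    {"source": "faq.md", "text": "Refunds are processed within five business days"},
]
unique_chunks = dedupe_chunks(chunks)
```

Restricting the comparison to chunks from the same source file keeps similar passages from different documents, which may carry distinct context. The pairwise check is quadratic, which is fine for the handful of chunks a single query retrieves.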

Stricter grounding rules that limited the agent to facts present in retrieved docs raised accuracy scores but lowered helpfulness on knowledge-gap turns. The bot started saying "the docs don't specify this, contact support" instead of guessing. For a factual support use case that trade-off is correct, but it requires a conscious decision: users may complain the bot got worse even as eval scores improve. The next step is a model sweep with retrieval settings held constant, since the defaults in most RAG stacks are rarely tuned for the actual query distribution.
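One way to structure such a sweep is sketched below. It is a sketch under assumptions, not the author's harness: `answer_fn` and `judge_fn` are hypothetical callables standing in for the RAG pipeline and the LLM judge, the model names are placeholders, and the stubs return fixed scores so the example runs without network access. Only the four score dimensions and the 0.7 and 0.8 values come from the text above.

```python
from dataclasses import dataclass
from statistics import mean

DIMENSIONS = ("relevance", "accuracy", "helpfulness", "overall")


@dataclass(frozen=True)  # frozen, so settings cannot drift during the sweep
class RetrievalSettings:
    max_distance: float
    dedupe_overlap: float


def sweep_models(models, questions, settings, answer_fn, judge_fn):
    """Average each judge dimension per model, using one shared retrieval config."""
    report = {}
    for model in models:
        scores = [judge_fn(q, answer_fn(model, q, settings)) for q in questions]
        report[model] = {dim: mean(s[dim] for s in scores) for dim in DIMENSIONS}
    return report


# Stubs for illustration; a real run would call the pipeline and the judge.
def fake_answer(model, question, settings):
    return f"[{model}] answer to: {question}"


def fake_judge(question, answer):
    return {"relevance": 7, "accuracy": 8, "helpfulness": 6, "overall": 7}


settings = RetrievalSettings(max_distance=0.7, dedupe_overlap=0.8)
report = sweep_models(
    ["model-a", "model-b"],
    ["hey what do you guys do?", "How do I get a refund?"],
    settings,
    fake_answer,
    fake_judge,
)
```

Reporting accuracy and helpfulness separately, rather than only the overall score, keeps the grounding trade-off visible when comparing models.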